CITE THIS NOTEBOOK: Benchmarking numerical differential equations in Wolfram Language by Hanfeng Zhai. Wolfram Community DEC 12 2022.

CITE THIS NOTEBOOK: Benchmarking numerical differential equations in Wolfram Language by Hanfeng Zhai. Wolfram Community DEC 12 2022.

The Linear Convection Equation

The Linear Convection Equation

The simplest and most accessible equation in computational fluid dynamics (CFD) or more general partial differential equations (PDEs) should be the linear convection equation (LCE), i.e., the wave equation. Solving this equation with finite differences schemes is usually the first step for CFD (or more general applied math) beginners. Here, we demonstrate and compare the simple numerical solution schemes of such equations in Wolfram Language by comparing the Wolfram built-in functions, i.e., DSolve and NDSolve, and finite differences, i.e., with forward differences, backward differences, & central differences. This blog/tutorial mainly serves for educational purposes, for differential equations (DEs) beginners to better understand solutions of ODEs and further PDEs with numerical implementations. Note that the forward differences finite differences implementation are adapted and inspired from the famous Python code 12 Steps to CFD by Prof. Lorena Barba.The linear convection equation(s) write:+c0where c is the parameter to be given, u is the solution, x and t are the spatial and temporal domains, respectively. At , one have the initial condition(s) (ICs): Given this ICs, if we replace the variable as , and we further define ; then the LCE reduces to a simple ODE: 0. We hence have the exact (analytical) solution:

∂u

∂t

∂u

∂x

t0

u(x,0)(x)

u

0

x-ct

y

v(y,t):u(y+ct,t)

dv

dy

u(x,t)(x-ct)

u

0

Now, we solve this DE using the aforementioned methods for benchmarking and a better understanding of numerical PDEs.

Solve LCE using Wolfram Built-in Solver

Solve LCE using Wolfram Built-in Solver

Wolfram Language provides rich libraries to solve differential equations. Analytically and numerically, we can use DSolve and NDSolve to get the solutions. Now we demonstrate the two approaches to obtain the corresponding solutions.

Now, we try to solve the equation symbolically using NDSolve.

First, we can use DSolve to obtain the analytical (or symbolic) solution. Given the constant. We first define the PDE in the symbolic form:

Now, we try to solve the equation symbolically using NDSolve.

First, we can use DSolve to obtain the analytical (or symbolic) solution. Given the constant

c1

In[]:=

c=1;pdewave=D[u[x,t],t]+cD[u[x,t],x]==0

Out[]=

(0,1)

u

(1,0)

u

To get the solution, we simply recall the DSolve module:

In[]:=

solnwave=DSolve[pdewave,u[x,t],{x,t}]

Out[]=

{{u[x,t][t-x]}}

1

We are lucky to some since the 1D linear convection has an analytical solution. However, most of the existing PDEs does not have such a solution. Hence, numerically solve this is essential. We here first demonstrate the method with the built-in function NDSolve. We first define the initial condition (ICs) as a function :

f(x)

In[]:=

f[x_]:=-Sin[5x-Pi/2]

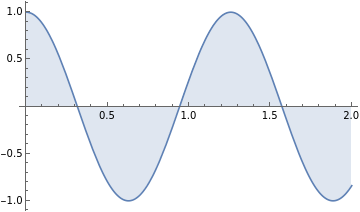

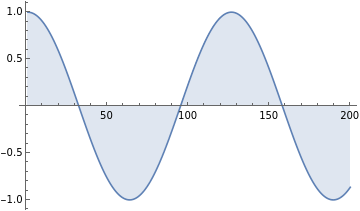

We can also visualize this initial condition:

In[]:=

Plot[-Sin[5x-Pi/2],{x,0,2},FillingAutomatic]

Out[]=

How to interpret this so-called ICs? We can think of the PDE as a "process". Given an initial state, the process will bring "it" (initial state) to "somewhere else", i.e. a final state. The ICS is this initial state, and the PDE, i.e. the wave equation, to take it to a final state to be solved. Numerical discretization can approximate this process.

To get solution, we recall the NDSolve module with also setting the boundary conditions (BCs):

In[]:=

mysol=NDSolve[{D[u[x,t],x]+D[u[x,t],t]==0,u[x,0]==f[x],u[0,t]==1},u[x,t],{x,0,2},{t,0,1}]

Out[]=

u[x,t]InterpolatingFunction[x,t]

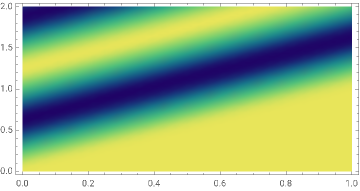

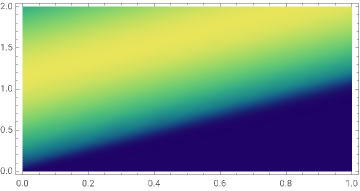

We can then visualize the results in the 3D surface plot:

In[]:=

Plot3D[Evaluate[u[x,t]/.%],{x,0,2},{t,0,1},PlotRangeAll,ColorFunction"BlueGreenYellow"]

Out[]=

In[]:=

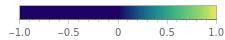

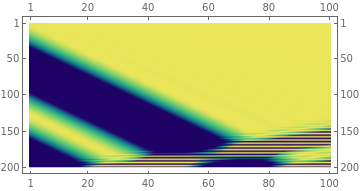

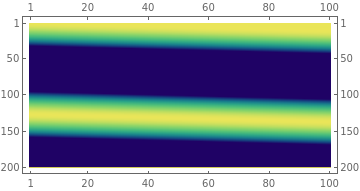

DensityPlot[Evaluate[u[x,t]/.%],{t,0,1},{x,0,2},ColorFunction"BlueGreenYellow",PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

Pretty cool, huh! This 3D surface plot visualizes our prementioned "process" - how the solution operator takes the initial state to a final state with regard to both space ( here ) and time ( here ). You may also notice the surface contour looks pretty much like a wave, and that's why it's called the wave equation. Now we can switch some other cool ICs and see how the LCE solutions behave.

x

t

We can also switch some ICs and see how the solution behaves. For example, taking the initial function as

f(x)+1.9-

x

e

10

2

(x-1)

In[]:=

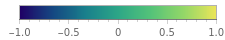

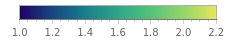

Plot[-(x-1)^2+1.9+Exp[x]/10,{x,0,2},PlotStyleBlue,FillingAutomatic]

Out[]=

Enforce this function as the ICs and solve the wave equation, we can visualize the results as follows:

In[]:=

fic[x_]:=-(x-1)^2+1.9+Exp[x]/10

In[]:=

msol1=NDSolve[{D[u[x,t],x]+D[u[x,t],t]==0,u[x,0]==fic[x],u[0,t]==1},u[x,t],{x,0,2},{t,0,1}];

In[]:=

DensityPlot[Evaluate[u[x,t]/.%],{t,0,1},{x,0,2},ColorFunction"BlueGreenYellow",PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

Which shows how the PDE "drives" this single wave to decay outside our solution region.

However, if we increase the order of the function, e.g. switch 2 to 16, we first visualize our ICs:

In[]:=

Plot[-(x-1)^16+1.9+Exp[x]/10,{x,0,2},PlotStyleBlue,FillingAutomatic]

Out[]=

Solving the PDE we can see something interesting happening:

In[]:=

fic[x_]:=-(x-1)^16+1.9+Exp[x]/10;msol2=NDSolve[{D[u[x,t],x]+D[u[x,t],t]==0,u[x,0]==fic[x],u[0,t]==1},u[x,t],{x,0,2},{t,0,1}];

In[]:=

DensityPlot[Evaluate[u[x,t]/.%],{t,0,1},{x,0,2},ColorFunction"BlueGreenYellow",PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

Then we observe a little "wavy behavior" of the discretized solution, which should not be expected. Why is this happening? It could be the discretization errors in NDSolve due to its employed discretization methods, i.e. discretization error. There could also be my hardware system problems, i.e., round-off errors. If you are excited about the specific reasons, feel free to read more here. Also, let me know if there are interests in related error analysis and I can further write a blog about this!

In[]:=

$System

Out[]=

Linux x86 (64-bit)

Simple as it is, NDSolve, as we can see, has many limitations. (1) It can not handle complex boundary conditions. As for educational purposes, this is not a big deal. But if you want to employ it to solve knotty engineering problems, robustness are required for complex ICs. (2) It requires prior knowledge about BCs, in which most of the time we don't know. In the LCE case, the BCs of may not be known. So we need methods can be more general. (3) We don't know the underneath code. For a clear-box modeling problem, knowing each steps is essential.

So, now, I will implement different finite difference schemes to solve the LCE and benchmark the solution processes!

Solve LCE using Explicit Numerical Discretization

Solve LCE using Explicit Numerical Discretization

In finite difference methods (FDM), the computational domain is discretized on a rectangular grid to obtain the numerical value on each node, i.e. intersections of the grids. Generally, FDM can be categorized into forward differences (FD), backward differences (BD) and central differences (CD).

Recall the LCE: . Taking forward differences as an example, its solution's partial derivative w.r.t. time and space domain and can be discretized with indices and : and .Now, we will try to solve LCE with the discretization schemes of taking FD for time and compare BD, FD, and CD for spatial discretization benchmarking.

Backward Differences Scheme

Backward Differences Scheme

Now let's begin with backward difference spatial discretization. The discretized form writes:

To implement this, we need to define both and . Solving in the same computational domain as previously implemented in NDSolve. We begin with and .

In[]:=

ClearAll["Global`*"]dx=0.1;

Defining the computational domain:

In[]:=

xl=2;Numpoints=IntegerPart[xl/dx];

Before defining the basis (nominated as xx), we first assign it with a constant array

In[]:=

xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,20}];

In[]:=

xsize=Dimensions[xx];

Defining the ICs, which will be further propagating to a "solution", as usol:

In[]:=

usol=ConstantArray[1,xsize];

Setting up , solution time and constant :

In[]:=

dt=0.01;tfinal=1;c=1;

In[]:=

numt=IntegerPart[tfinal/dt];

Same as we previously defined in NDSolve, we use a sinusoidal function as ICs:

In[]:=

usol=-Sin[5xx-Pi/2];(*usol*)

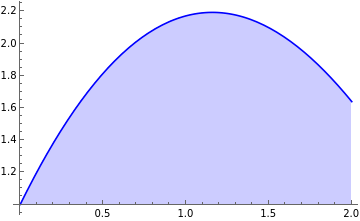

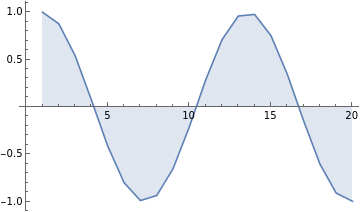

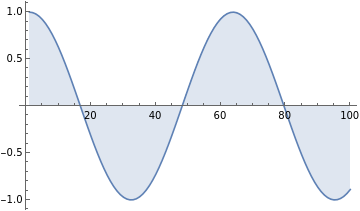

Since we discretize the smooth function on a pretty coarse grid (). The visualization looks kind of rough:

In[]:=

ListLinePlot[usol,FillingAutomatic]

Out[]=

Now, we define a solution matrix, the solution operator, i.e. how the ICs propagate in the spatiotemporal domain is stored in this matrix:

In[]:=

SolMat=ConstantArray[1,{numt,20}];

And we can solve the PDE given our discretization form written in a double for-loops, in which the discretized solution operator is stored in the SolMat:

In[]:=

For[t=1,t≤numt,t++,For[i=2,i≤Numpoints,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*(usol[[i]]-usol[[i-1]]);SolMat[[t,i]]=usol[[i]];]]

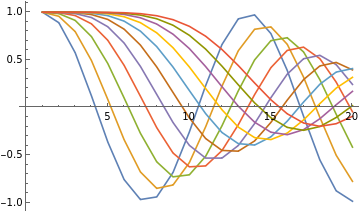

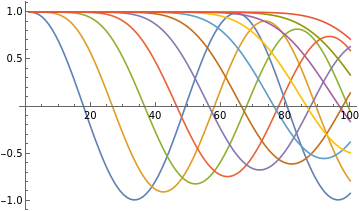

Obtaining the discretized solutions, we can then visualize how to "wave" propagates w.r.t time in the spatial domain. If take 0.1 as the time interval, the visualization shows as follows:

In[]:=

ListLinePlot[{SolMat[[1]],SolMat[[10]],SolMat[[20]],SolMat[[30]],SolMat[[40]],SolMat[[50]],SolMat[[60]],SolMat[[70]],SolMat[[80]],SolMat[[90]],SolMat[[100]]}]

Out[]=

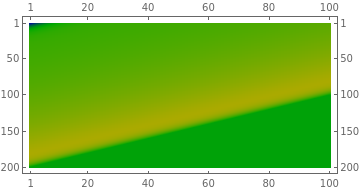

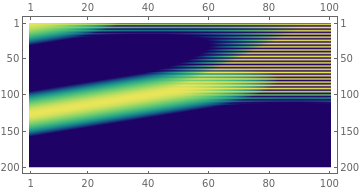

Now, we can visualize the solution operator in the spatiotemporal domain. Note that this figure is visualized as reverse in the spatial domain but essential says the same thing. Comparing this figure with the solution obtained from NDSolve we can observe the coarse spatial discretization effects, considering the visualization from NDSolve is pretty smooth.

In[]:=

MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

Of course we can decrease the discretization intervals for better solution approximations:

In[]:=

ClearAll["Global`*"];dx=0.02;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,100}];xsize=Dimensions[xx];dt=0.01;tfinal=1;usol=ConstantArray[1,xsize];usol=-Sin[5xx-Pi/2];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,100}];c=1;

Visualize our ICs: with

In[]:=

ListLinePlot[usol,FillingAutomatic]

Out[]=

Obtain the solution and plot its changes of spatial distribution w.r.t. time.

In[]:=

For[t=1,t≤100,t++,For[i=2,i≤100,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*(usol[[i]]-usol[[i-1]]);SolMat[[t,i]]=usol[[i]];]];

In[]:=

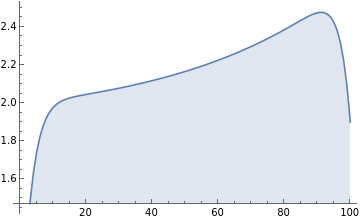

ListLinePlot[{SolMat[[1]],SolMat[[10]],SolMat[[20]],SolMat[[30]],SolMat[[40]],SolMat[[50]],SolMat[[60]],SolMat[[70]],SolMat[[80]],SolMat[[90]],SolMat[[100]]}]

Out[]=

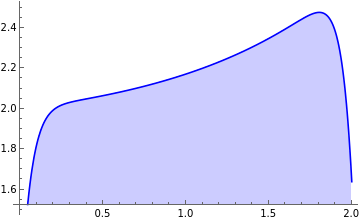

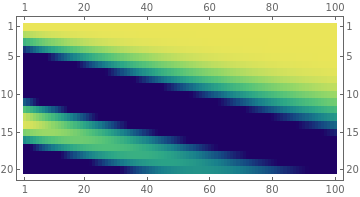

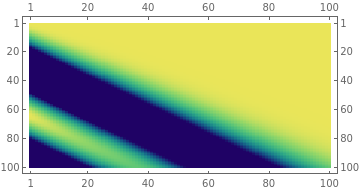

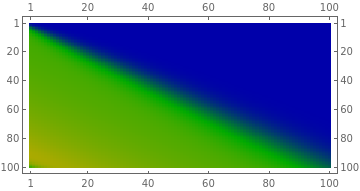

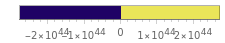

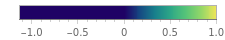

Plot the solution operator projected in the spatiotemporal domain:

In[]:=

MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

In this case,the approximated solutions are much smoother. Comparing this with our previous solutions with NDSolve we can conclude that NDSolve discretization should be more complex than our vanilla BD schemes, with a smooth gradient transition observed.

Since our previous implementations show that NDSolve tend not to obtain a decent solution operator for higher order forms of ICs, we here implement this vanilla BD scheme to test the case. The IC write

In[]:=

uicshighorder=-(xx-1)^16+1.9+Exp[xx]/10;ListLinePlot[uicshighorder,FillingAutomatic]

Out[]=

In[]:=

For[t=1,t≤100,t++,For[i=2,i≤100,i++,uicshighorder[[i]]=uicshighorder[[i]]-(c*dt/dx)*(uicshighorder[[i]]-uicshighorder[[i-1]]);SolMat[[t,i]]=uicshighorder[[i]];]];

We can then visualize how the spatial distribution of the solution evolve w.r.t. time:

In[]:=

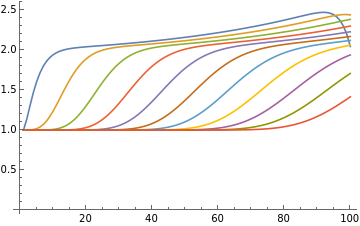

ListLinePlot[{SolMat[[1]],SolMat[[10]],SolMat[[20]],SolMat[[30]],SolMat[[40]],SolMat[[50]],SolMat[[60]],SolMat[[70]],SolMat[[80]],SolMat[[90]],SolMat[[100]]}]

Out[]=

In[]:=

MatrixPlot[Transpose[SolMat],ColorFunction(Blend[{{Min[SolMat],Darker[Blue]},{(Min[SolMat]+Max[SolMat])/2,Darker[Green]},{Max[SolMat],Darker[Yellow]}},#]&),ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

And now we don't have the "wavy behavior" in the solution operator of our PDE! The initial wave gradually propagates outside the computational domain.

Another cool thing with the "hands-on" numerical discretization is that we can encode complex ICs for solutions. A good (and classic) example would be the complex square waves:

We first clear the memory space, define the variables, and define our complex initial square waves condition:

In[]:=

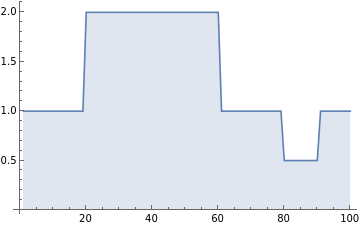

ClearAll["Global`*"];dx=0.02;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,100}];xsize=Dimensions[xx];dt=0.01;tfinal=1;usol=ConstantArray[1,xsize];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,100}];c=1;n1=20;n2=60;n3=80;n4=90;(usol[[n1;;n2]])=ConstantArray[2,Dimensions[usol[[n1;;n2]]]];(usol[[n3;;n4]])=ConstantArray[0.5,Dimensions[usol[[n3;;n4]]]];ListLinePlot[usol,FillingAutomatic]

Out[]=

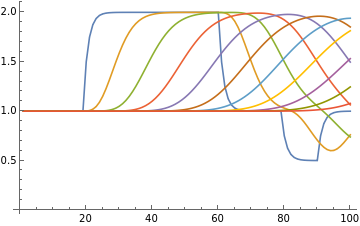

Solving this scenario with our vanilla BD we can then plot both the solution propagation w.r.t. time and its projection in the spatiotemporal domain:

In[]:=

For[t=1,t≤100,t++,For[i=2,i≤100,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*(usol[[i]]-usol[[i-1]]);SolMat[[t,i]]=usol[[i]];]];

In[]:=

ListLinePlot[{SolMat[[1]],SolMat[[10]],SolMat[[20]],SolMat[[30]],SolMat[[40]],SolMat[[50]],SolMat[[60]],SolMat[[70]],SolMat[[80]],SolMat[[90]],SolMat[[100]]}]

Out[]=

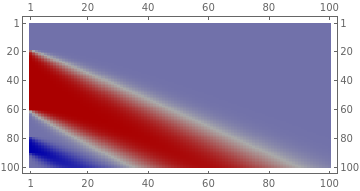

Since this is a special case that specifically employed for our "hands-on" discretization, we use a different cool visualization method:

In[]:=

MatrixPlot[Transpose[SolMat],ColorFunction(Blend[{{Min[SolMat],Darker[Blue]},{(Min[SolMat]+Max[SolMat])/2,Darker[White]},{Max[SolMat],Darker[Red]}},#]&),ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

Looks pretty cool huh! The hands-on implemented finite difference schemes can give us an approximated solution for the sharp wavy ICs.

Forward Differences Scheme

Forward Differences Scheme

Now, let's just modify our previous backward-difference discretization scheme a little bit, but applying the difference backward we just make it approximate the differentiation by "moving forward":

Let's apply this scheme to our previous employed case used for NDSolve. The implementation is simple:

In[]:=

ClearAll["Global`*"];dx=0.01;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,Numpoints}];xsize=Dimensions[xx];dt=dx;tfinal=1;usol=Sin[xx-Pi/2];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,Numpoints}];c=1;usol=-Sin[5xx-Pi/2];(*usol=ConstantArray[1,xsize];*)

Double-check it looks the same as our previous ICs; and then approximate use the forward difference scheme:

In[]:=

ListLinePlot[usol,FillingAutomatic]

Out[]=

Discretize and solve the numerical solution:

In[]:=

For[t=1,t≤numt,t++,For[i=2,i≤Numpoints-1,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*(usol[[i+1]]-usol[[i]]);SolMat[[t,i]]=usol[[i]];]];

In[]:=

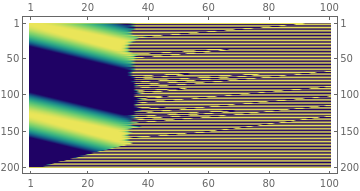

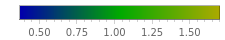

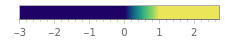

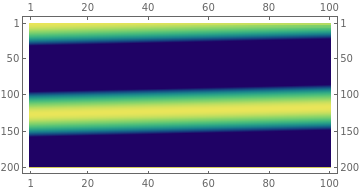

MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

Oops! Seems like we did not get the correct solution. One of the primary reason here is that the FD scheme is not stable for the LCE when . The detailed explanation can be checked here. To implement FD for a stable numerical solution, we need to set .

In[]:=

ClearAll["Global`*"];dx=0.01;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,Numpoints}];xsize=Dimensions[xx];dt=dx;tfinal=1;usol=Sin[xx-Pi/2];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,Numpoints}];c=-1;usol=-Sin[5xx-Pi/2];(*usol=ConstantArray[1,xsize];*)

In[]:=

For[t=1,t≤numt,t++,For[i=2,i≤Numpoints-1,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*(usol[[i+1]]-usol[[i]]);SolMat[[t,i]]=usol[[i]];]];

In[]:=

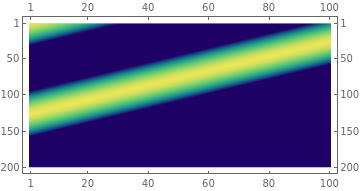

MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

And now the FD successfully obtain the numerical solutions!

Giving the IC as a higher order differential equation, we can also approximate the solution.

In[]:=

uicshighorder=-(xx-1)^16+Exp[xx]/10+1.12;For[t=1,t≤numt,t++,For[i=1,i≤Numpoints-1,i++,uicshighorder[[i]]=uicshighorder[[i]]-(c*dt/dx)*(uicshighorder[[i+1]]-uicshighorder[[i]]);SolMat[[t,i]]=uicshighorder[[i]];]];MatrixPlot[Transpose[SolMat],ColorFunction(Blend[{{Min[SolMat],Darker[Blue]},{(Min[SolMat]+Max[SolMat])/2,Darker[Green]},{Max[SolMat],Darker[Yellow]}},#]&),ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

In[]:=

ListLinePlot[{SolMat[[1]],SolMat[[10]],SolMat[[20]],SolMat[[30]],SolMat[[40]],SolMat[[50]],SolMat[[60]],SolMat[[70]],SolMat[[80]],SolMat[[90]],SolMat[[100]]}]

Out[]=

Central Differences Scheme

Central Differences Scheme

Similar to FD and BD, central difference (CD) scheme approximate the spatial solution distribution by using both the adjacent nodes, i.e. and . The discretized form of LCE writes:

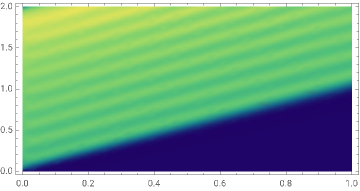

Implement the CD with to the periodic sinusoidal wave we have the following solution:

In[]:=

ClearAll["Global`*"];c=-1;dx=0.01;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,Numpoints}];xsize=Dimensions[xx];dt=dx;tfinal=1;usol=Sin[xx-Pi/2];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,Numpoints}];usol=-Sin[5xx-Pi/2];For[t=1,t≤numt,t++,For[i=2,i≤Numpoints-1,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*.5*(usol[[i+1]]-usol[[i-1]]);SolMat[[t,i]]=usol[[i]];]];MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

Oops! The unstable solution occurs again. Why...? Because CD is unstable regardless of values. Now let's switch it to a negative value of .

In[]:=

ClearAll["Global`*"];c=1;dx=0.01;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,Numpoints}];xsize=Dimensions[xx];dt=dx;tfinal=1;usol=Sin[xx-Pi/2];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,Numpoints}];usol=-Sin[5xx-Pi/2];For[t=1,t≤numt,t++,For[i=2,i≤Numpoints-1,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*.5*(usol[[i+1]]-usol[[i-1]]);SolMat[[t,i]]=usol[[i]];]];MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

The cliché happens. So can CD give us a stable solution? Let's now make the absolute value of smaller, i.e. .

In[]:=

ClearAll["Global`*"];c=.1;dx=0.01;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,Numpoints}];xsize=Dimensions[xx];dt=dx;tfinal=1;usol=Sin[xx-Pi/2];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,Numpoints}];usol=-Sin[5xx-Pi/2];For[t=1,t≤numt,t++,For[i=2,i≤Numpoints-1,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*.5*(usol[[i+1]]-usol[[i-1]]);SolMat[[t,i]]=usol[[i]];]];MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

In[]:=

ClearAll["Global`*"];c=-.1;dx=0.01;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,Numpoints}];xsize=Dimensions[xx];dt=dx;tfinal=1;usol=Sin[xx-Pi/2];numt=IntegerPart[tfinal/dt];SolMat=ConstantArray[1,{numt,Numpoints}];usol=-Sin[5xx-Pi/2];For[t=1,t≤numt,t++,For[i=2,i≤Numpoints-1,i++,usol[[i]]=usol[[i]]-(c*dt/dx)*.5*(usol[[i+1]]-usol[[i-1]]);SolMat[[t,i]]=usol[[i]];]];MatrixPlot[Transpose[SolMat],ColorFunction"BlueGreenYellow",ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2]

Out[]=

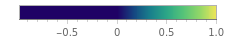

Benchmarking Solution Schemes

Benchmarking Solution Schemes

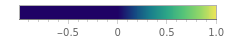

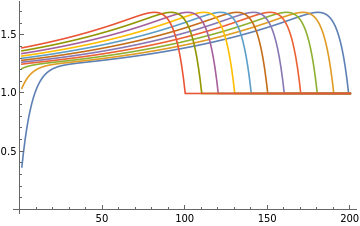

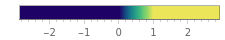

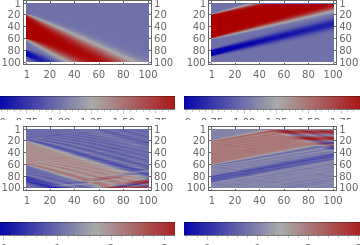

Since both BD, FD, and CD are (or are expected to) be able to approximate the solution with the sharp square wave ICs. We can now benchmark the spatiotemporal solutions obtained from both three methods and check the difference.

In[]:=

ClearAll["Global`*"];dx=0.02;xl=2;Numpoints=IntegerPart[xl/dx];xx=RecurrenceTable[{x[i]==x[i-1]+dx,x[1]==0},x,{i,1,100}];xsize=Dimensions[xx];dt=0.01;tfinal=1;usol=ConstantArray[1,xsize];numt=IntegerPart[tfinal/dt];SolMatbd=ConstantArray[1,{numt,100}];SolMatfd=ConstantArray[1,{numt,100}];SolMatcd1=ConstantArray[1,{numt,100}];SolMatcd2=ConstantArray[1,{numt,100}];n1=20;n2=60;n3=80;n4=90;(usol[[n1;;n2]])=ConstantArray[2,Dimensions[usol[[n1;;n2]]]];(usol[[n3;;n4]])=ConstantArray[0.5,Dimensions[usol[[n3;;n4]]]];usol1=usol;usol2=usol;usol3=usol;usol4=usol;

In[]:=

ListLinePlot[usol,FillingAutomatic]

Out[]=

Obtain the solutions from the four different schemes,(1) Backward difference with ; (2) Forward difference with ; (3) Central difference with ; Central difference with ; and compare them in graphics.

In[]:=

c=1;For[t=1,t≤100,t++,For[i=2,i≤100,i++,usol1[[i]]=usol1[[i]]-(c*dt/dx)*(usol1[[i]]-usol1[[i-1]]);SolMatbd[[t,i]]=usol1[[i]];]];

In[]:=

c=-1;For[t=1,t≤100,t++,For[i=2,i≤99,i++,usol2[[i]]=usol2[[i]]-(c*dt/dx)*(usol2[[i+1]]-usol2[[i]]);SolMatfd[[t,i]]=usol2[[i]];]];

In[]:=

c=1;For[t=1,t≤100,t++,For[i=2,i≤99,i++,usol3[[i]]=usol3[[i]]-(c*dt/dx)*.5*(usol3[[i+1]]-usol3[[i-1]]);SolMatcd1[[t,i]]=usol3[[i]];]];

In[]:=

c=-1;For[t=1,t≤100,t++,For[i=2,i≤99,i++,usol4[[i]]=usol4[[i]]-(c*dt/dx)*.5*(usol4[[i+1]]-usol4[[i-1]]);SolMatcd2[[t,i]]=usol4[[i]];]];

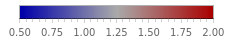

In[]:=

figbd=MatrixPlot[Transpose[SolMatbd],ColorFunction(Blend[{{Min[SolMatbd],Darker[Blue]},{(Min[SolMatbd]+Max[SolMatbd])/2,Darker[White]},{Max[SolMatbd],Darker[Red]}},#]&),ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2];figfd=MatrixPlot[Transpose[SolMatfd],ColorFunction(Blend[{{Min[SolMatfd],Darker[Blue]},{(Min[SolMatfd]+Max[SolMatfd])/2,Darker[White]},{Max[SolMatfd],Darker[Red]}},#]&),ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2];figcd1=MatrixPlot[Transpose[SolMatcd1],ColorFunction(Blend[{{Min[SolMatcd1],Darker[Blue]},{(Min[SolMatcd1]+Max[SolMatcd1])/2,Darker[White]},{Max[SolMatcd1],Darker[Red]}},#]&),ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2];figcd2=MatrixPlot[Transpose[SolMatcd2],ColorFunction(Blend[{{Min[SolMatcd2],Darker[Blue]},{(Min[SolMatcd2]+Max[SolMatcd2])/2,Darker[White]},{Max[SolMatcd2],Darker[Red]}},#]&),ColorFunctionScalingFalse,PlotLegendsAutomatic,AspectRatio1/2];

In[]:=

GraphicsGrid[{{figbd,figfd},{figcd1,figcd2}}]

Out[]=

As expected, the CD schemes did not converge well with wavy behavior due to is a relatively large value.

In short, NDSolve is still very useful or mostly useful considering our employed simple finite difference schemes for a simple linear PDE does not converge to the solution operator just given different equation parameters with different discretization schemes. A few takeaways can be concluded:

A higher order or sharp ICs may lead to the unstable solution of NDSolve.

One should be very careful about the finite difference schemes considering different equation parameters.

In the case of LCE, backward and forward differences require positive and negative, respectively, to make the approximated solutions stable.

Central differences are generally not stable for LCE. However, one can still obtain a converged numerical solution when the parameter is close to zero.

NDSolve is generally more stable w.r.t. to different kinds of PDEs as we do not need much prior knowledge regarding the equations to be solved.

References

References