How might we make cats in cellular automata discoverable?

Philosophy & Strategy Track

Title after the children’s book Spot a cat by Lucy Micklethwait (Dorling Kindersley, 1995), which is designed to get young children engaged with fine art.

“Animals are good to think with.” —Claude Lévi-Strauss

“You see, wire telegraph is a kind of a very, very long cat. You pull his tail in New York and his head is meowing in Los Angeles. Do you understand this? And radio operates exactly the same way: you send signals here, they receive them there. The only difference is that there is no cat.” —A quote apocryphally attributed to Albert Einstein

Author’s note: The code used in this notebook is not easily run on the web. We are considering how to get you a code-rich version of this post and hope to get to that soon. Meanwhile, enjoy.

GOAL OF THE PROJECT: Stephen Wolfram has tasked me with finding images of cats in cellular automata based on his essay, Generative AI Space and the Mental Imagery of Alien Minds, in which he explored the computational space around AI-generated images of cats in party hats.

How might we discover the cats in cellular automata (CA)? How might we identify representational images in CA by representing words and CA-generated images as vectors? How might we refine these representations?

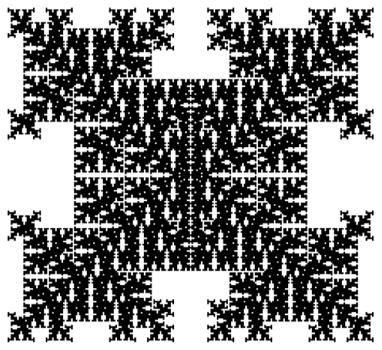

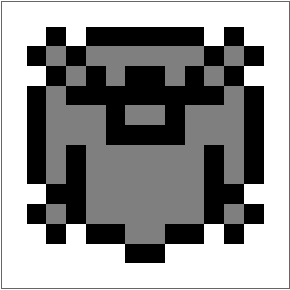

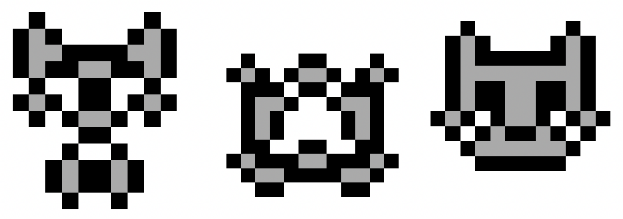

SUMMARY OF RESULTS: By applying a reflection to grids generated by 2D CA rules, we generate emoji-style representations of words, which we call Rorschachs in honor of the inkblot test, and we use vector representations of the images to find the best match for each word. This results in representational CA images that both computers and humans can recognize. What we seem to get when we access CA cat space to make Rorschach cats is a rudimentary version of a search tool that provides transparency into the CLIP and OpenCLIP Multi-Domain Feature Extractors. Delivering access to cat space seems to deliver a view into the concept space. What we have is a toy model of a tool at a low resolution. The possibilities are very intriguing.

Details

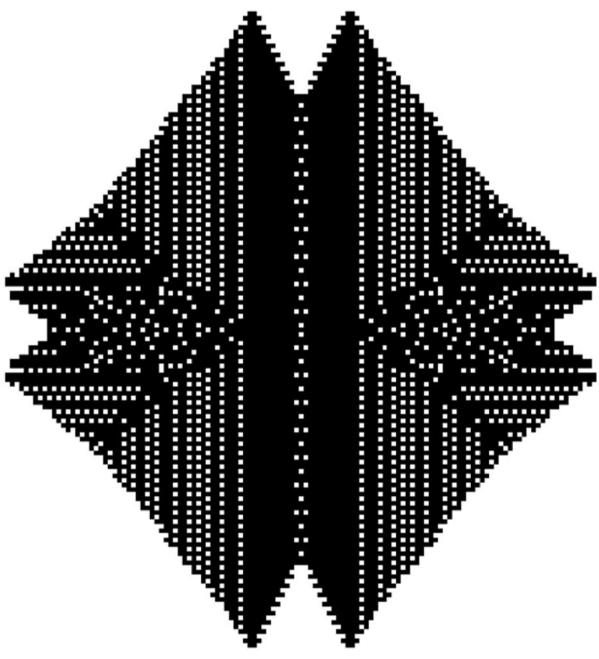

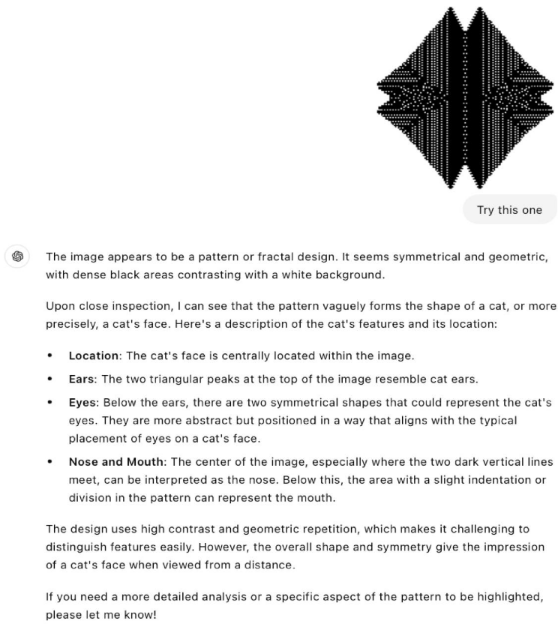

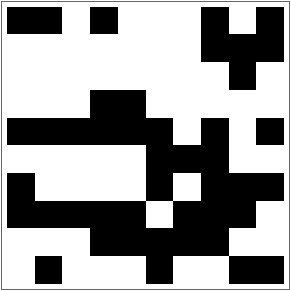

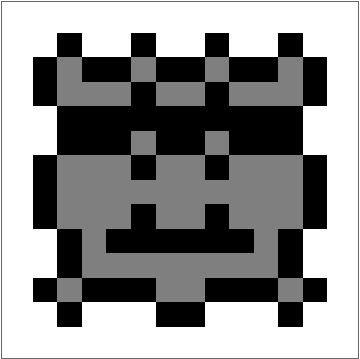

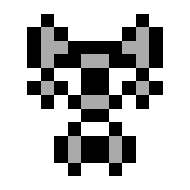

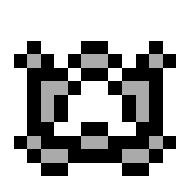

Are there cats in Cellular Automata? There are no cats in CAs. But just as there is a face on Mars and the Virgin Mary can appear on a piece of toast, we can see cats in cellular automata, and it’s not very hard to find CA that look cattish. Here is one we call Darth Kitty:

The task at hand, to find cats in CA algorithmically, should be understood as a positive use of machine hallucination. (The initial computer science mention of the phrase had to do with getting machines to see faces: Baker, S., & Kanade, T. (2000). Hallucinating faces. Proceedings Fourth IEEE International Conference on Automatic Face and Gesture Recognition (Cat. No. PR00580), 83-88. https://doi.org/10.1109/AFGR.2000.840616)

How do we see cats? How do computers see cats? Humans and computers do not see eye-to-eye on what a cat looks like. In the CA image above, Darth Kitty, I see cat-like ears, and if you turn it sideways it’s got an evil face. The computer doesn’t think so. The computer failed to see cats in CA that we could see, and I disagreed with the computer’s rankings of images on cattishness.

A preliminary idea for how this might be accomplished, furnished by Stephen Wolfram, is to look at CA images with CLIP and, using evolutionary strategies, attempt to introduce mutations to the CA rules that would improve the rules’ cat-fitness. The idea is that if you run this long enough, it might eventually converge to something resembling, at least in CLIP’s judgement, a cat.

We explored using CLIP to identify cats in images and ran into some problems. CLIP is very sensitive to image resizing, sometimes doesn’t see animals that we see in CA patterns because of overemphasis on outlines, and is sensitive to the placement of the target image. It is not good at the image gestalt. We needed a way to force consensus.

In considering how to go about this, we did some preliminary exploration. A key question is “What does a cat look like to an AI?” We humans have trained on different cat data than AIs, so our assumptions of what an AI might think is catlike are not necessarily correct. This turns out to be a more complex question than I had initially thought, since the essence of catness we want detected will be in the form of cellular automata plots which do not, in general, look like cats.
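The evolutionary idea described above can be sketched as a simple hill climb over 18-bit outer totalistic codes. This is only a minimal sketch under stated assumptions, not the procedure used in this project: `mutate`, `hillClimb`, and `toyFitness` are hypothetical names, the target code 224 is arbitrary, and the stand-in fitness would have to be replaced by a CLIP-based score (for example, the negative cosine distance to the text “cat”).

```wolfram
(* Hypothetical helper: flip one randomly chosen bit of an 18-bit rule code *)
mutate[code_Integer] := BitXor[code, 2^RandomInteger[{0, 17}]]

(* Hypothetical helper: keep a mutation only if it scores strictly higher *)
hillClimb[fitness_, start_Integer, steps_Integer] :=
  Nest[
    Function[best,
      With[{candidate = mutate[best]},
        If[fitness[candidate] > fitness[best], candidate, best]]],
    start, steps]

(* Stand-in fitness: negative Hamming distance to an arbitrary target code.
   A real run would score the CA images with CLIP instead. *)
target = 224;
toyFitness[code_Integer] := -Total[IntegerDigits[BitXor[code, target], 2]]

SeedRandom[1];
result = hillClimb[toyFitness, 0, 500];

(* The accepted fitness never decreases, so this comparison is True *)
toyFitness[result] >= toyFitness[0]
```

Because only improving mutations are accepted, a climb like this can stall on a local optimum; with a noisy fitness such as a CLIP score, a population-based strategy may behave differently.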

Minimal cat faces: the center of catishness

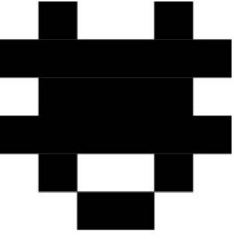

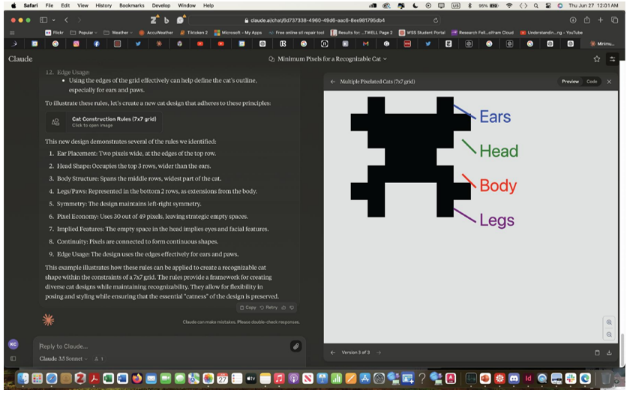

For this one, Claude attempts to describe a minimal cat body.

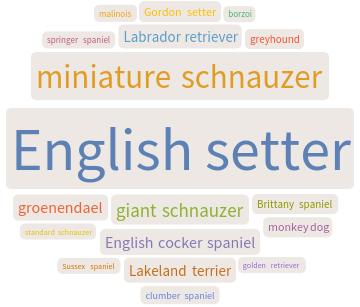

Outliers: The Blind Man & the Elephant problem

I built a pet detector to see what animals ImageIdentify[] thinks might be in CA. This generates two companion landscapes: the relative (low) probability that a given CA is an animal and, if it were an animal, which animal it would be.

In[]:=

pic=Import["/Users/kathryncramer/Desktop/1-6-105.png"]

Out[]=

In[]:=

data=ImageIdentify[pic,Entity["Word","domestic animal"],18,"Probability"]

WordCloud[data]

Out[]=

0.0496457,0.0282423,0.0164433,0.0141074,0.0138909,0.0135454,0.0130683,0.0108018,0.01065,0.0103034,0.0096566,0.00907027,0.00854387,0.00836093,0.00803061,0.00784764,0.00764759,0.00757324

Out[]=

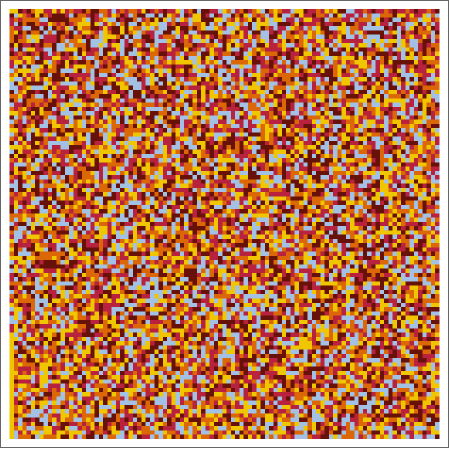

ImageIdentify says the most likely pet in this CA image is an English Setter. From Wikipedia, we can get an image of an English Setter, which looks like this:

It’s picking up on the coat coloration.

The problem of Corn

For very noisy CA, the identifiers are very sensitive to color (and also to outlines).

In[]:=

test=Import["/Users/kathryncramer/Desktop/test CA.png"]

Out[]=

In[]:=

data=ImageIdentify[test,All,18,"Probability"]

WordCloud[data]

Out[]=

0.678848,0.678848,0.678848,0.693234,0.701889,0.701907,0.702708,0.702715,0.733252,0.00279813,0.00210918,0.00136542,0.00106162,0.0742235,0.0104904,0.00489222,0.00261387,0.00138032

Out[]=

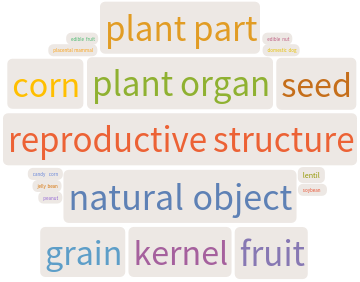

Multi-Modal LLMs as Image Identifiers

GPT-4o as an image identifier is much better at the gestalt of images as we see them than other types of image identification.

Rorschach Cats: The Cat Vision Cheat Code

What we get from binding CLIP to CA to look for cats

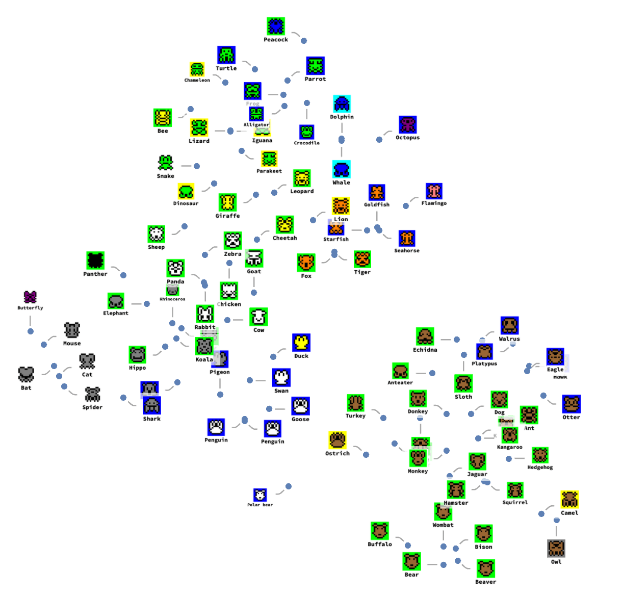

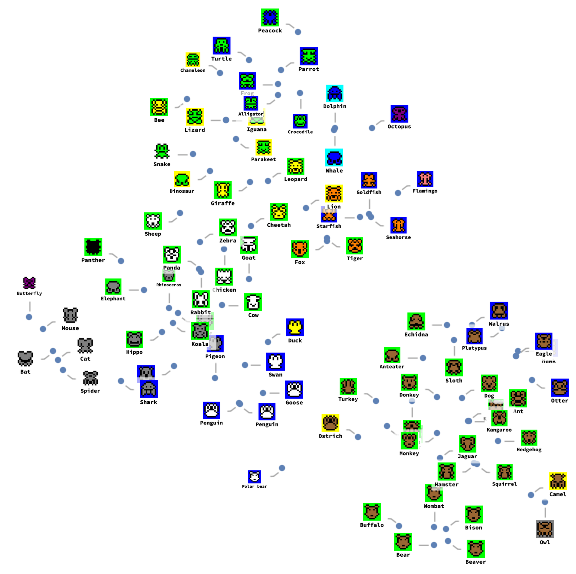

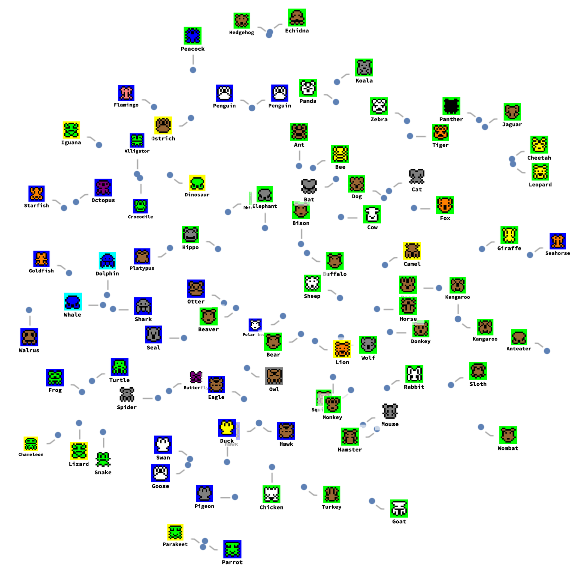

We can use FeatureSpacePlot to see how our Rorschach animals are conceptually and visually related to one another in the resulting space.

It seems that if we can build a thing that can find a cat in a cellular automata space, it can also find Jesus, and anything else in its down-sampled conceptual space. Except, to paraphrase the apocryphal Einstein quote, there is no cat.

2D CAs Rules Definitions

Growth-Decay Cases Rules and Outer Totalistic Code Rules Map the same space of 2D rules

◼

In 2D there are 2^18 Outer Totalistic Code rules in total.

262144
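The count of 262144 is 2^18: a cell has 2 possible values and 0 to 8 live neighbors, giving 18 cases, each of which can map to 0 or 1. A minimal sketch of how a Growth-Decay specification could be converted to an outer totalistic code follows. `growthDecayToCode` is a hypothetical helper that is not part of the original notebook, and it assumes the usual 2-color weighting, in which the total is 2 × (live neighbors) + (cell value) and bit number `total` of the code gives the next value of the cell.

```wolfram
(* Hypothetical helper, assuming total = 2*(live neighbors) + (cell value) *)
growthDecayToCode[{growth_List, decay_List}] :=
  Total[2^(2 growth)] +                             (* dead cell with n in growth becomes 1 *)
  Total[2^(2 Complement[Range[0, 8], decay] + 1)]   (* live cell with n not in decay stays 1 *)

2^18
(* 262144 *)

growthDecayToCode[{Range[0, 8], {}}]
(* 262143: every cell becomes 1 *)

growthDecayToCode[{{3}, {0, 1, 4, 5, 6, 7, 8}}]
(* 224: the Game of Life *)
```

Under this assumption, the first result agrees with the pair of equivalent rules shown next (code 262143 and Growth-Decay cases {{0,1,2,3,4,5,6,7,8},{}}).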

◼

An example of equivalent rules using both notations:

In[]:=

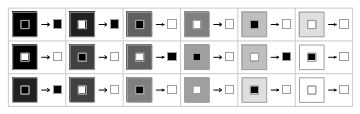

RulePlot[CellularAutomaton[<|"OuterTotalisticCode"->262143,"Dimension"->2|>]]

Out[]=

In[]:=

RulePlot[CellularAutomaton[<|"GrowthDecayCases"->{{0,1,2,3,4,5,6,7,8},{}},"Dimension"->2|>]]

Out[]=

Evolve a 2D CA for several generations

◼

Set the initial conditions for a grid of cells:

In[]:=

SeedRandom[1];initialConditions=RandomInteger[1,{10,10}]

Out[]=

{{1,1,0,1,0,0,0,1,0,1},{0,0,0,0,0,0,0,1,1,1},{0,0,0,0,0,0,0,0,1,0},{0,0,0,1,1,0,0,0,0,0},{1,1,1,1,1,1,0,1,0,1},{0,0,0,0,0,1,1,1,0,0},{1,0,0,0,0,1,0,1,1,1},{1,1,1,1,1,0,1,1,1,0},{0,0,0,1,1,1,1,1,0,0},{0,1,0,0,0,1,0,0,1,1}}

In[]:=

ArrayPlot[initialConditions]

Out[]=

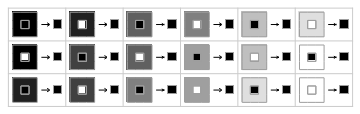

Some CAs reach stable configurations after some generations

◼

For example, the following CA reaches a stable fixed configuration after 16 generations:

In[]:=

Map[ArrayPlot,CellularAutomaton[<|"Dimension"->2,"GrowthDecayCases"->{{2,3},{5}}|>,initialConditions,17]]

Out[]=

[Output: a list of 18 array plots, the initial grid followed by 17 generations; images not shown]

Some other CAs have periodic oscillations after some generations

Adding Bi-lateral Symmetry, an Outline and a Padding to Grids

In[]:=

gridTransform[grid_List] := Module[
  {reflectedArray, assembledArray, paddedArray, replaceZerosWithTwos},
  (* Mirror the grid left-to-right *)
  reflectedArray = Reverse[grid, 2];
  (* Join the grid and its mirror image side by side for bilateral symmetry *)
  assembledArray = Join[grid, reflectedArray, 2];
  (* Add a one-cell border of 0s *)
  paddedArray = ArrayPad[assembledArray, 1, 0];
  (* Outline: replace every 0 that is orthogonally adjacent to a 1 with a 2 *)
  replaceZerosWithTwos[arr_] := Module[{result = arr, dims = Dimensions[arr]},
    Do[
      If[arr[[i, j]] == 0 && (
          If[i > 1, arr[[i - 1, j]] == 1, False] ||
          If[i < dims[[1]], arr[[i + 1, j]] == 1, False] ||
          If[j > 1, arr[[i, j - 1]] == 1, False] ||
          If[j < dims[[2]], arr[[i, j + 1]] == 1, False]),
        result[[i, j]] = 2
      ],
      {i, 1, dims[[1]]}, {j, 1, dims[[2]]}
    ];
    result
  ];
  (* Apply the outline, then add one more border of 0s *)
  ArrayPad[replaceZerosWithTwos[paddedArray], 1, 0]
]
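To make the mirroring and padding steps concrete, here is a small worked example on a 2x2 grid. The names `small`, `mirrored`, and `padded` are illustrative only and do not appear in the notebook.

```wolfram
small = {{1, 0}, {0, 1}};

(* Mirror left-to-right and join side by side *)
mirrored = Join[small, Reverse[small, 2], 2]
(* {{1, 0, 0, 1}, {0, 1, 1, 0}} *)

(* One-cell border of 0s on every side *)
padded = ArrayPad[mirrored, 1, 0];
Dimensions[padded]
(* {4, 6} *)
```

By the same arithmetic, a 10x5 grid becomes 10x10 after mirroring, 12x12 after the first padding, and 14x14 after the outline step and the second padding.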

Create Rorschach Blots with 10x5 grids to obtain representations with bi-lateral symmetry.

In[]:=

SeedRandom[666];initialConditions=RandomInteger[1,{10,5}]

Out[]=

{{1,0,1,1,1},{0,1,0,1,0},{1,0,0,0,0},{1,1,1,0,1},{1,1,1,0,0},{1,0,1,1,1},{1,0,1,1,1},{0,0,1,1,1},{1,0,1,1,1},{0,0,0,0,1}}

In[]:=

ArrayPlot@gridTransform[initialConditions]

Out[]=

Apply 2D CA rules to the initial grid and let it evolve for multiple generations

In[]:=

SeedRandom[1];initialConditions=RandomInteger[1,{10,5}]

Out[]=

{{1,1,0,1,0},{0,0,1,0,1},{0,0,0,0,0},{0,0,1,1,1},{0,0,0,0,0},{0,0,0,1,0},{0,0,0,1,1},{0,0,0,0,0},{1,1,1,1,1},{1,0,1,0,1}}

In[]:=

ListAnimate@Map[ArrayPlot[gridTransform[#]]&,CellularAutomaton[<|"Dimension"->2,"GrowthDecayCases"->{{2,3},{1,5}}|>,initialConditions,18]]

Out[]=

In[]:=

SeedRandom[666];initialConditions=RandomInteger[1,{10,5}]

Out[]=

{{1,0,1,1,1},{0,1,0,1,0},{1,0,0,0,0},{1,1,1,0,1},{1,1,1,0,0},{1,0,1,1,1},{1,0,1,1,1},{0,0,1,1,1},{1,0,1,1,1},{0,0,0,0,1}}

In[]:=

ListAnimate@Map[ArrayPlot[gridTransform[#]]&,CellularAutomaton[<|"Dimension"->2,"GrowthDecayCases"->{{2,3},{1,5}}|>,initialConditions,18]]

Out[]=

Using a Multi-domain Feature Extractor Neural Network to measure similarity between generated images and given entities

In[]:=

NetModel["OpenCLIP Multi-domain Feature Extractor Trained on LAION-2B Data"]

Out[]=

NetChain

◼

Generate the images from the CA grids:

In[]:=

images=Map[Image[ReplaceAll[gridTransform[#],{0->White,1->Lighter@Gray,2->Black}]]&,CellularAutomaton[<|"Dimension"->2,"GrowthDecayCases"->{{2,3},{1,5}}|>,initialConditions,15]]

Out[]=

[Output: a list of 16 grid images, the initial grid followed by 15 generations; images not shown]

◼

Obtain the feature vectors corresponding to generated grid images:

In[]:=

images10by10InitialGridsFeatures=NetModel[{"OpenCLIP Multi-domain Feature Extractor Trained on LAION-2B Data","InputDomain"->"Image"}][images];

◼

Create the feature vector corresponding to the concept of “pixel art cat”:

In[]:=

textFeatures=NetModel[{"OpenCLIP Multi-domain Feature Extractor Trained on LAION-2B Data","InputDomain"->"Text"}]["pixel art cat"];

In[]:=

SortBy[Thread[{images,First@DistanceMatrix[{textFeatures},images10by10InitialGridsFeatures,DistanceFunction->CosineDistance]}],Last]

Out[]=

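[Output: the 16 images paired with their cosine distances to “pixel art cat”, sorted in ascending order; images not shown. The distances are:]

0.712569, 0.713973, 0.753807, 0.753807, 0.754582, 0.764333, 0.766356, 0.770558, 0.771349, 0.772441, 0.77272, 0.774767, 0.779863, 0.781741, 0.789378, 0.803805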

◼

The image with the smallest distance is the one that is closest to the concept “pixel art cat” in terms of the OpenCLIP representational space.

◼

In this example we generated only 15 different images starting from a single initial grid.
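As a reminder of what is being ranked: CosineDistance is 1 minus the cosine of the angle between two vectors, so it is 0 for vectors pointing the same way, 1 for orthogonal vectors, and 2 for opposite vectors. The sketch below ranks three made-up 3-dimensional vectors against a made-up “text” vector; the numbers are toy values, not CLIP features.

```wolfram
(* Toy stand-ins for feature vectors; real CLIP features are much longer *)
text = {1., 0., 0.};
candidates = <|"a" -> {0.9, 0.1, 0.}, "b" -> {0., 1., 0.}, "c" -> {-1., 0., 0.}|>;

(* Distance from the text vector to each candidate *)
distances = CosineDistance[text, #] & /@ candidates;

(* Sorting an association orders it by value, so the closest key comes first *)
Keys[Sort[distances]]
(* {"a", "b", "c"} *)
```

Seen this way, the distances of roughly 0.71 to 0.80 reported above differ only modestly from one another, so the ranking is best read as a relative ordering.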

Finding Good “Cat” 2D CAs Rules from a subset of 25k+ Outer Totalistic Code Rules

Let’s try to identify “good” 2D CA rules for generating cats. Here we will explore 25k+ Outer Totalistic Code rules, which is around 10% of all possible outer totalistic rules in 2D (262144 rules).

In[]:=

(* Generate a 2D CA from an outer totalistic code and return the styled final grid *)
ClearAll[generate2DCAOuterTotalisticCode];
generate2DCAOuterTotalisticCode[initialConditions_, outerTotalisticCode_Integer, generations_] := Module[
  {init, rules, caArray},
  (* Initialize the grid with the provided initial conditions *)
  init = initialConditions;
  (* Define the cellular automaton with the specified rule *)
  rules = <|"Dimension" -> 2, "OuterTotalisticCode" -> outerTotalisticCode|>;
  (* Evolve for the specified number of generations and keep the last grid *)
  caArray = Last@CellularAutomaton[rules, init, generations];
  (* Mirror, outline, and pad the grid *)
  gridTransform[caArray]
]

In[]:=

ArrayPlot@generate2DCAOuterTotalisticCode[initialConditions,4321,5]

Out[]=

◼

Create 100k initial random 10x5 grids:

In[]:=

initialConditionsList=Table[SeedRandom[i];RandomInteger[1,{10,5}],{i,100000}];

◼

Check whether all initial 10x5 grids are unique:

In[]:=

Length@Union[initialConditionsList]

Out[]=

100000

Define a function that takes 25 random initial grids, evolves them with a specific rule for a given number of generations, and then measures the mean distance to a certain “entity/concept” like “cat”:

In[]:=

subsetInits = Take[initialConditionsList, 25];
entityMeanDistance[code_Integer, generations_Integer, entity_String] := Module[
  {caGrids, caImages, caImagesFeatures, textFeatures},
  (* Evolve each of the 25 initial grids under the given rule *)
  caGrids = Map[generate2DCAOuterTotalisticCode[#, code, generations] &, subsetInits];
  (* Color the grids: background white, cells light gray, outline black *)
  caImages = Map[Image[ReplaceAll[#, {0 -> White, 1 -> Lighter@Gray, 2 -> Black}]] &, caGrids];
  (* Image and text features from the same OpenCLIP model *)
  caImagesFeatures = NetModel[{"OpenCLIP Multi-domain Feature Extractor Trained on LAION-2B Data", "InputDomain" -> "Image"}][caImages];
  textFeatures = NetModel[{"OpenCLIP Multi-domain Feature Extractor Trained on LAION-2B Data", "InputDomain" -> "Text"}][entity];
  (* Mean cosine distance between the text and the 25 images *)
  Mean@First@DistanceMatrix[{textFeatures}, caImagesFeatures, DistanceFunction -> CosineDistance]
]

◼

Calculate the mean distance of the 25 random initial grids without evolving them (generations=0) to the concept “cat”:

In[]:=

entityMeanDistance[44,0,"cat"]

Out[]=

0.773689

◼

Create 25k+ outer totalistic code rules:

In[]:=

randomCodes=Table[SeedRandom[i];RandomInteger[{0,262143}],{i,27000}];

In[]:=

Length@Union@randomCodes

Out[]=

25692
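The drop from 27000 draws to 25692 distinct codes is about what sampling with replacement predicts. As a rough check, assuming the draws behave like independent uniform samples (they are actually seeded one by one, so this is only an approximation), the expected number of distinct values after n draws from N possibilities is N (1 - (1 - 1/N)^n). The names `nRules`, `nDraws`, and `expectedUnique` are illustrative.

```wolfram
nRules = 2^18;   (* 262144 possible codes *)
nDraws = 27000;  (* number of random draws *)

(* Expected number of distinct codes under independent uniform sampling *)
expectedUnique = nRules (1. - (1. - 1./nRules)^nDraws)
```

This evaluates to roughly 25,650, close to the 25692 distinct codes observed.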

◼

Calculate the mean distances to the concept “cat” of 25k+ 2D CA evolved for 5 generations under the above random code rules

meanDistancesPixelCat=Map[entityMeanDistance[#,5,"cat"]&,randomCodes];

◼

Display the results:

In[]:=

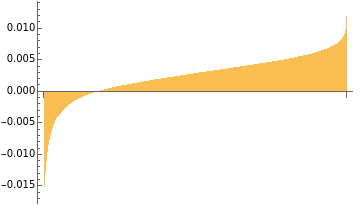

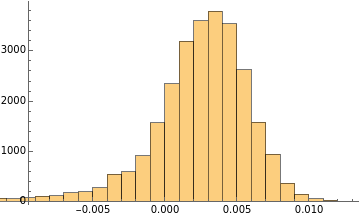

BarChart[Sort[(0.773688735101492`-meanDistancesPixelCat)]]

Out[]=

In[]:=

Histogram[Sort[(0.773688735101492`-meanDistancesPixelCat)]]

Out[]=

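Both plots show the baseline mean distance of the unevolved grids (0.773689) minus each rule’s mean distance, so positive values mean that the evolved grids are closer to “cat”. We can see that after 5 generations, for 25 randomly selected initial grids, the majority of 2D CAs yield a shorter mean distance to the concept “cat”. There are also a lot of rules that destroy the “catness” of the initial random grids more strongly (left tails of the graphs).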
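A statement like “the majority” can be put into numbers by counting how many rules beat the baseline. The sketch below does this on five made-up distances; `baseline`, `dists`, and `improvement` are toy names and values, not the notebook’s data.

```wolfram
baseline = 0.774;                          (* toy baseline distance *)
dists = {0.761, 0.770, 0.780, 0.790, 0.772};  (* toy mean distances, one per rule *)

(* Positive improvement means the rule moved the grids closer to the concept *)
improvement = baseline - dists;

(* Fraction of rules that improve on the baseline *)
N@Mean@Boole[Positive[improvement]]
(* 0.6 *)
```

Applied to the real list, the same expression with `0.773688735101492` and `meanDistancesPixelCat` would give the fraction of sampled rules that improve on the unevolved grids.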

◼

Explore the “best” cat rule:

We can use Min to obtain it.

In[]:=

Min[meanDistancesPixelCat]

Out[]=

0.761027

And position to find the corresponding rule code:

In[]:=

Position[meanDistancesPixelCat,Min[meanDistancesPixelCat]]

Out[]=

{{6467}}

In[]:=

randomCodes[[6467]]

Out[]=

181264
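The Min and Position steps above can also be done in one step with Ordering, which returns the positions of the smallest elements. A minimal sketch on toy data (the codes and distances here are made up):

```wolfram
codes = {101, 202, 303, 404};       (* toy rule codes *)
dists = {0.78, 0.76, 0.79, 0.77};   (* toy mean distances *)

(* Position of the smallest distance *)
best = First@Ordering[dists, 1];

{best, codes[[best]], dists[[best]]}
(* {2, 202, 0.76} *)
```

Ordering[dists, k] with a larger k would return the positions of the k best rules rather than just the single best one.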

In[]:=

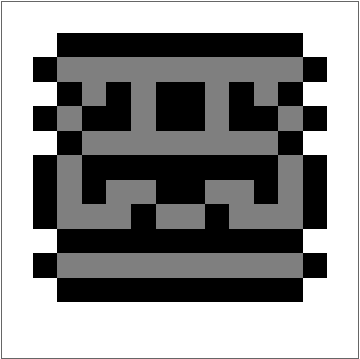

RulePlot[CellularAutomaton[<|"OuterTotalisticCode"->181264,"Dimension"->2|>]]

Out[]=

Generate 100k images with the found “cat” rule

In[]:=

generations=5;

caGridsCats=Map[generate2DCAOuterTotalisticCode[#,181264,generations]&,initialConditionsList];caImagesCats=Map[Image[ReplaceAll[#,{0->White,1->Lighter@Gray,2->Black}]]&,caGridsCats];

◼

Note that the final generated images contain 723 duplicated images (there is degeneracy), since only 99277 of the 100000 grids are distinct:

In[]:=

Length@Union@caGridsCats

Out[]=

99277

◼

These are the first 25 images:

In[]:=

caImagesCats[[;;25]]

Out[]=

[Output: a list of 25 CA images; not shown]

◼

Now let’s use OpenCLIP to find the generated images closest to the concept “cat”. (Note that computing the image features can take up to several hours; to avoid recomputing them, import the attached file at the bottom of the post.)

In[]:=

caImagesFeaturesCats=NetModel[{"OpenCLIP Multi-domain Feature Extractor Trained on LAION-2B Data","InputDomain"->"Image"}][caImagesCats];

In[]:=

textFeatures=NetModel[{"OpenCLIP Multi-domain Feature Extractor Trained on LAION-2B Data","InputDomain"->"Text"}]["cat"];

Grid@Partition[Take[SortBy[Thread[{caImagesCats,First@DistanceMatrix[{textFeatures},caImagesFeaturesCats,DistanceFunction->CosineDistance]}],Last],50][[All,1]],25]

Out[]=

◼

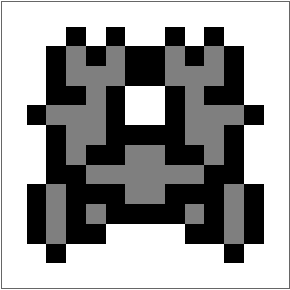

Some of them can certainly be regarded as cats, at least in “pixel art terms”.

Animals Semantic Space Plot

In[]:=

animals={"Dog","Cat","Rabbit","Mouse","Lion","Elephant","Giraffe","Zebra","Monkey","Kangaroo","Koala","Sloth","Leopard","Cheetah","Crocodile","Alligator","Shark","Whale","Dolphin","Penguin","Seal","Chicken","Goat","Sheep","Cow","Horse","Donkey","Turkey","Duck","Goose","Parrot","Eagle","Hawk","Pigeon","Hawk","Owl","Fox","Wolf","Bear","Deer","Squirrel","Hamster","Hedgehog","Goldfish","Turtle","Frog","Snake","Lizard","Iguana","Butterfly","Bee","Ant","Spider","Octopus","Seahorse","Starfish","Panda","Hippo","Camel","Peacock","Flamingo","Otter","Bat","Beaver","Walrus","Polar bear","Penguin","Ostrich","Platypus","Dinosaur","Rhinoceros","Parakeet","Chameleon","Buffalo","Bison","Tiger","Jaguar","Panther","Anteater","Echidna","Kangaroo","Wombat","Swan"};

In[]:=

initialConditionsList=Import["/Users/jofree/Documents/Wolfram/WSS24/Students/Kathryn/initialConditions.wl"];

In[]:=

Union[initialConditionsList]//Length

Out[]=

49999

Set Growth-Decay Cases Rules

In[]:=

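(* First list of "GrowthDecayCases" (growth): a dead cell becomes live with 1, 2, or 3 live neighbors. The name survivalRules is kept because the code below uses it. *)
survivalRules={1,2,3};
(* Second list of "GrowthDecayCases" (decay): a live cell dies with exactly 1 live neighbor. The name growthRules is kept because the code below uses it. *)
growthRules={1};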

Set number of generations

Typically, evolving these CA for 5 generations is enough to reach a final fixed state.

In[]:=

generations=5;

In[]:=

(* Generate a 2D CA from Growth-Decay cases and return the styled final grid *)
generate2DCA[initialConditions_, survivalRules_, growthRules_, generations_] := Module[
  {init, rules, caArray},
  (* Initialize the grid with the provided initial conditions *)
  init = initialConditions;
  (* Define the cellular automaton with the specified rules *)
  rules = <|"Dimension" -> 2, "GrowthDecayCases" -> {survivalRules, growthRules}|>;
  (* Apply the cellular automaton rules for the specified generations *)
  caArray = Last@CellularAutomaton[rules, init, generations];
  (* Transpose the grid, then mirror, outline, and pad it *)
  gridTransform[Transpose[caArray]]
]

In[]:=

caList=Map[generate2DCA[#,survivalRules,growthRules,generations]&,initialConditionsList];

In[]:=

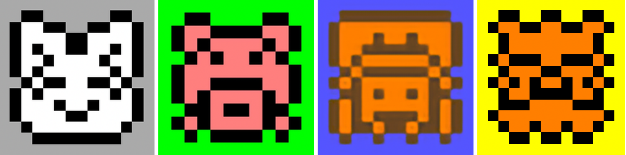

(* Create the images with the specified RGB colors *)
images=Map[Image[ReplaceAll[#,{0->Lighter@Blue,1->Orange,2->Darker@Brown}],ColorSpace->"RGB"]&,caList];

In[]:=

RandomSample[images,4]

Out[]=

[Output: 4 randomly sampled CA images; not shown]

◼

The computation of the features might take several hours (import the attached file instead):

imgFeatures = NetModel[{"CLIP Multi-domain Feature Extractor", "InputDomain" -> "Image"}][images];

In[]:=

imgFeatures=Import["/Users/jofree/Documents/Wolfram/WSS24/Students/Kathryn/imgFeatures2.wl"];

In[]:=

textFeatures=NetModel[{"CLIP Multi-domain Feature Extractor","InputDomain"->"Text"}]["bee"];

Grid@Partition[Take[SortBy[Thread[{images,First@DistanceMatrix[{textFeatures},imgFeatures,DistanceFunction->CosineDistance]}],Last],50][[All,1]],25]

Out[]=

Asking LLM for colors

◼

Define an LLMFunction to get main and background colors for a given entity:

In[]:=

getColors=LLMFunction[StringTemplate["Give me the main color for a pixel art `entity` and its main background color as a list of colors in Wolfram Language from the following list: {Red,Green,Blue,Yellow,Orange,Purple,Pink,Cyan,Magenta,Brown,White,Gray}\nAnswer only with the list {color1,color2} and nothing else (Note that the outline is Black)."],LLMEvaluator-><|"Model"->{"OpenAI","gpt-4o"},"Temperature"->0|>]

Out[]=

LLMFunction

In[]:=

ToExpression@getColors[<|"entity"->"pig"|>]

Out[]=

[Output: a list of two colors; swatches not shown]
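Calling ToExpression on raw LLM output evaluates whatever text comes back. A more defensive option, sketched here under my own assumptions, is to pick out only the color names that were offered in the prompt. `allowedColors` and `parseColors` are hypothetical names that are not part of the notebook, and the reply string is a made-up example of what the model might return.

```wolfram
(* Map each permitted color name to its color value *)
allowedColors = AssociationMap[ToExpression,
  {"Red", "Green", "Blue", "Yellow", "Orange", "Purple",
   "Pink", "Cyan", "Magenta", "Brown", "White", "Gray"}];

(* Hypothetical parser: keep only known color names, in order of appearance *)
parseColors[reply_String] :=
  Lookup[allowedColors, StringCases[reply, Keys[allowedColors]]]

(* Made-up reply string for illustration *)
parseColors["{Pink, White}"] === {Pink, White}
(* True *)
```

A reply containing no recognized color name gives an empty list, which the calling code can check for before building an image.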

In[]:=

generateImage[{color1_,color2_},ca_]:=Image[ReplaceAll[ca,{1->color1,2->Black,0->color2}]]

In[]:=

ClearAll[getCAImage]

In[]:=

getCAImage[entity_String] := With[
  {
    colors = ToExpression@getColors[<|"entity" -> entity|>],
    textFeatures = NetModel[{"CLIP Multi-domain Feature Extractor", "InputDomain" -> "Text"}]["pixel art " <> entity]
  },
  ImageResize[
    generateImage[
      colors,
      Take[SortBy[Thread[{caList, First@DistanceMatrix[{textFeatures}, imgFeatures, DistanceFunction -> CosineDistance]}], Last], 1][[1, 1]]
    ],
    50
  ]
]

In[]:=

getCAImage["tiger"]

Out[]=

In[]:=

getCAImage["bee"]

Out[]=

In[]:=

getCAImage["lion"]

Out[]=

In[]:=

getCAImage["Dog"]

Out[]=

In[]:=

animalImages=Map[getCAImage[#]&,animals]

Out[]=

[Output: a list of 83 CA images, one per entry in animals; not shown]

In[]:=

labels=MapThread[Rasterize[Labeled[#1,Style[#2,Bold,FontSize->16]]]&,{animalImages,animals}]

In[]:=

dataImages=Thread[animalImages->labels]

In[]:=

FeatureSpacePlot[dataImages,FeatureExtractor->NetModel[{"CLIP Multi-domain Feature Extractor","InputDomain"->"Image"}],LabelingFunction->Callout,ImageSize->Large]

Out[]=

In[]:=

dataText=Thread[animals->labels]

In[]:=

FeatureSpacePlot[dataText,FeatureExtractor->NetModel[{"CLIP Multi-domain Feature Extractor","InputDomain"->"Text"}],LabelingFunction->Callout,ImageSize->Large]

Out[]=

Getting embeddings for CAs and Concepts:

In[]:=

animalImagesEmbeddings=DimensionReduce[animalImages,FeatureExtractor->NetModel[{"CLIP Multi-domain Feature Extractor","InputDomain"->"Image"}],Method->"PrincipalComponentsAnalysis"];

In[]:=

animalTextEmbeddings=DimensionReduce[animals,FeatureExtractor->NetModel[{"CLIP Multi-domain Feature Extractor","InputDomain"->"Text"}],Method->"PrincipalComponentsAnalysis"];

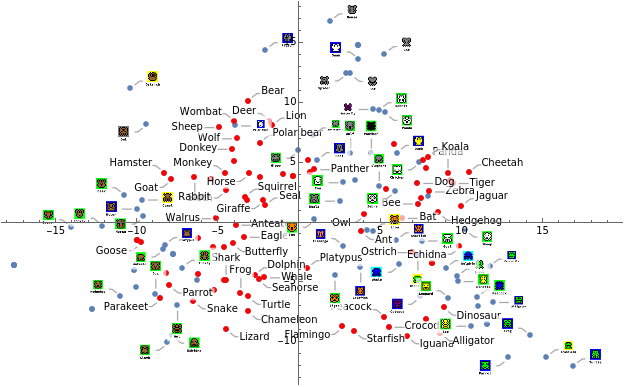

Visualising these embeddings using dimensionality reduction:

In[]:=

ListPlot[Join[Thread[Callout[animalImagesEmbeddings,labels]],Thread[Style[Thread[Callout[animalTextEmbeddings,animals]],Red]]],ImageSize->900]

Out[]=
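One thing to keep in mind when reading this plot: the image and text embeddings above are reduced by two separate DimensionReduce calls, so each set gets its own principal axes and the two point clouds are not strictly in the same coordinate system. A possible alternative, sketched here on random stand-in vectors rather than real CLIP features, is to reduce both sets together and then split the result. The names `imageVectors`, `textVectors`, and `joint` are illustrative.

```wolfram
SeedRandom[1];
imageVectors = RandomReal[1, {10, 8}];  (* stand-ins for image features *)
textVectors = RandomReal[1, {10, 8}];   (* stand-ins for text features *)

(* Fit one projection on the combined data so both sets share the same axes *)
joint = DimensionReduce[Join[imageVectors, textVectors], 2,
   Method -> "PrincipalComponentsAnalysis"];

(* Split the reduced points back into the two groups *)
{imagePoints, textPoints} = TakeDrop[joint, Length[imageVectors]];

Dimensions /@ {imagePoints, textPoints}
(* {{10, 2}, {10, 2}} *)
```

With real data, the feature vectors would first be computed with the image and text NetModel variants used above and then joined in the same way.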

ROADS NOT TAKEN: I considered what kinds of cellular automata might be able to deliver full cat faces and looked into CA systems such as neural cellular automata and Lenia (on the advice of UVM’s Josh Bongard), but continuous cellular automata were outside the scope of what I could work with in the space of a few weeks.

FURTHER WORK

◼

Higher resolution image processing and image generation

◼

More techniques for using feature extractors to generate representational images

◼

The potential for using the linkage of CA and multi-domain feature extractors such as CLIP as a search tool

◼

Exploring the computational implications of relationships between concepts defined by cellular automata.

◼

We did explore Wolfram’s concept of using evolutionary algorithms to improve cattishness. It seemed, at the resolution we were working, to make only a small improvement but might work better at higher resolutions. Here are a few more highly evolved Rorschach cats:

Many thanks to Jofre and Phinéas who did an amazing job of supporting and guiding me through this exploration and coming up with concepts for how to make it work, and to Stephen Wolfram who made that off-the-wall suggestion that I go look for cats in CA. —KC

References

◼

Baker, S., & Kanade, T. (2000). Hallucinating faces. Proceedings Fourth IEEE International Conference on Automatic Face and Gesture Recognition (Cat. No. PR00580), 83–88. https://doi.org/10.1109/AFGR.2000.840616

◼

Cramer, K. (2022, July 20). Automated Design Fiction: A Method for Inferring Hidden Mechanics of GPT-3. International Conference on Computation in Social Science., Chicago, IL. https://drive.google.com/file/d/1DqyvURcQOpaeCVX7ZDV-KUFicOhiUQTH/view?usp=sharing

◼

Micklethwait, L. (1995). Spot a cat (1st American ed). Dorling Kindersley.

◼

Olah, C., Satyanarayan, A., Johnson, I., Carter, S., Schubert, L., Ye, K., & Mordvintsev, A. (2018). The Building Blocks of Interpretability. Distill, 3(3), 10.23915/distill.00010. https://doi.org/10.23915/distill.00010

◼

Shane, J. (2020). Trained a neural net on my cat and regret everything. AI Weirdness. https://www.aiweirdness.com/trained-a-neural-net-on-my-cat-and-20-04-17/

◼

Soshnikov, D. (2022). How Neural Network Sees a Cat. Personal Homepage of Dmitri Soshnikov. https://soshnikov.com/education/how-neural-network-sees-a-cat/

◼

Wolfram, S. (2002). A New Kind of Science. Wolfram Media. https://www.wolframscience.com

◼

Wolfram, S. (2023, July 17). Generative AI Space and the Mental Imagery of Alien Minds. Stephen Wolfram Writings. https://writings.stephenwolfram.com/2023/07/generative-ai-space-and-the-mental-imagery-of-alien-minds/

◼

Wolfram, S. (2024, May 3). Why Does Biological Evolution Work? A Minimal Model for Biological Evolution and Other Adaptive Processes. Stephen Wolfram Writings. https://writings.stephenwolfram.com/2024/05/why-does-biological-evolution-work-a-minimal-model-for-biological-evolution-and-other-adaptive-processes/

◼

Yurkth. (2021). Sprator [Computer software]. https://github.com/yurkth/sprator

◼

Zimmerman, J. W., Hudon, D., Cramer, K., Onge, J. S., Fudolig, M., Trujillo, M. Z., Danforth, C. M., & Dodds, P. S. (2023). A blind spot for large language models: Supradiegetic linguistic information (arXiv:2306.06794). arXiv. http://arxiv.org/abs/2306.06794. There’s also a later version of our paper at Plutonics: Zimmerman, J. W., Hudon, D., Cramer, K., Onge, J. S., Fudolig, M., Trujillo, M. Z., Danforth, C. M., & Dodds, P. S. (2024). A blind spot for large language models: Supradiegetic linguistic information. Plutonics: A Journal of Non-Standard Theory, XVII. https://plutonicsjournal.com/wp-content/uploads/2024/05/Plutonics17spread.pdf

CITE THIS NOTEBOOK

Spot a cat: cellular automata edition, or representational images in cellular automata

by Kathryn Cramer

Wolfram Community, STAFF PICKS, July 10, 2024

https://community.wolfram.com/groups/-/m/t/3207689
