Abstract

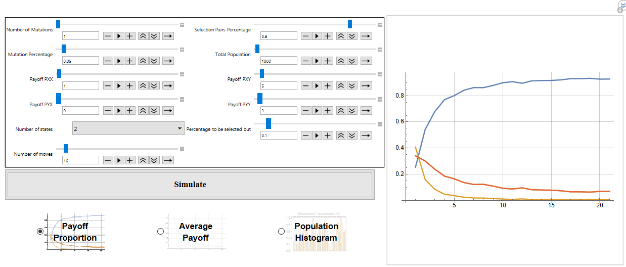

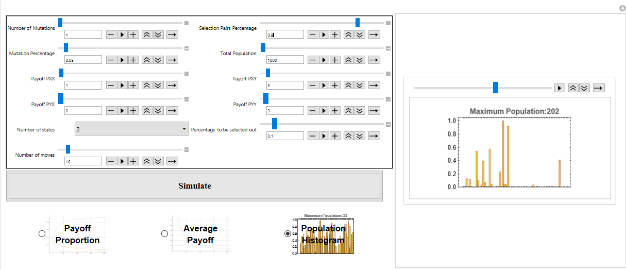

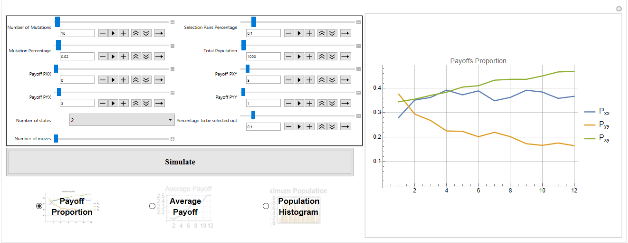

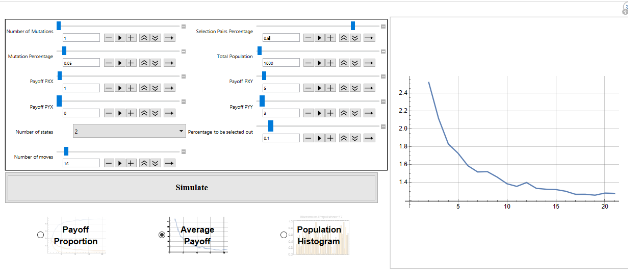

While evolutionary game theory has focused on tractable solutions with explicit dynamics imposed on a population, much interesting behaviour emerges from simulating even simple games. In particular, games that have only mixed-strategy equilibria, or no evolutionarily stable strategy (ESS), still exhibit rich evolutionary dynamics, including the evolution of cheap-talk signalling, punctuated evolution, and extinction events, even in the absence of any exogenous factors. This project seeks to consolidate and extend existing materials to allow analysis of symmetric repeated evolutionary games played by deterministic finite automata.
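To illustrate what "a symmetric repeated game played by deterministic finite automata" means, here is a minimal sketch. It assumes an iterated Prisoner's Dilemma as the stage game, which the abstract does not specify. Each strategy is a Moore machine: every state emits an action, and the machine moves to its next state based on the opponent's last action. The `Automaton` class, the `play` and `fitness` functions, and the payoff values are hypothetical illustrations, not the project's own implementation.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Actions in the (assumed) Prisoner's Dilemma stage game.
C, D = "C", "D"

# Hypothetical symmetric payoff table: PAYOFF[(mine, theirs)] -> my payoff.
# Textbook values T=5, R=3, P=1, S=0, chosen purely for illustration.
PAYOFF: Dict[Tuple[str, str], int] = {
    (C, C): 3, (C, D): 0,
    (D, C): 5, (D, D): 1,
}


@dataclass(frozen=True)
class Automaton:
    """A deterministic finite automaton (Moore machine) strategy.

    output[s] is the action played in state s; transition[s][a] is the next
    state after the opponent plays action a. Play starts in state 0.
    """
    output: List[str]
    transition: List[Dict[str, int]]


def play(a: Automaton, b: Automaton, rounds: int) -> Tuple[int, int]:
    """Play the stage game for a fixed number of rounds; return total payoffs."""
    sa, sb = 0, 0
    pa = pb = 0
    for _ in range(rounds):
        move_a, move_b = a.output[sa], b.output[sb]
        pa += PAYOFF[(move_a, move_b)]
        pb += PAYOFF[(move_b, move_a)]  # symmetric game: same table, roles swapped
        # Each machine transitions on the opponent's action this round.
        sa, sb = a.transition[sa][move_b], b.transition[sb][move_a]
    return pa, pb


def fitness(population: List[Automaton], rounds: int) -> List[float]:
    """Mean payoff of each automaton against every *other* member (needs >= 2)."""
    n = len(population)
    scores = [0.0] * n
    for i in range(n):
        for j in range(i + 1, n):
            pi, pj = play(population[i], population[j], rounds)
            scores[i] += pi
            scores[j] += pj
    return [s / (n - 1) for s in scores]


# Two classic strategies encoded as automata.
TIT_FOR_TAT = Automaton(output=[C, D], transition=[{C: 0, D: 1}, {C: 0, D: 1}])
ALWAYS_DEFECT = Automaton(output=[D], transition=[{C: 0, D: 0}])
```

For example, over 10 rounds `play(TIT_FOR_TAT, ALWAYS_DEFECT, 10)` returns `(9, 14)`: tit-for-tat is exploited once and then both defect. In the population `[TIT_FOR_TAT, TIT_FOR_TAT, ALWAYS_DEFECT]`, however, `fitness` gives tit-for-tat the higher mean payoff (19.5 versus 14.0), the kind of frequency-dependent fitness that an evolutionary simulation would then feed into selection and mutation of the automata.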