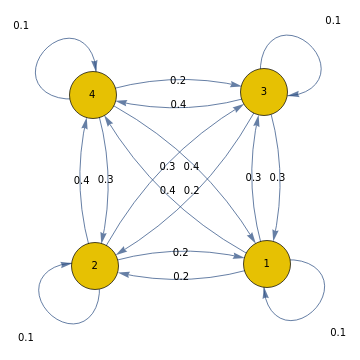

(*Markovtransitionkernelfrom{1,2,3,4}to{1,2,3,4}asamatrixandgraph*)t={{0.1,0.2,0.3,0.4},{0.2,0.1,0.3,0.4},{0.3,0.2,0.1,0.4},{0.4,0.3,0.2,0.1}};MatrixForm[t]

Out[]//MatrixForm=

0.1 | 0.2 | 0.3 | 0.4 |

0.2 | 0.1 | 0.3 | 0.4 |

0.3 | 0.2 | 0.1 | 0.4 |

0.4 | 0.3 | 0.2 | 0.1 |

graph=Graph[DiscreteMarkovProcess[1,t]];Scan[(PropertyValue[{graph,#},EdgeLabels]=PropertyValue[{graph,#},"Probability"])&,EdgeList[graph]];graph

Out[]=

We explore a concept of entropy which assigns a quantity to each application of an abstract rewriting rule, or more precisely to each morphism in a symmetric monoidal category endowed with the structure of a Markov category (Fritz, 2020; Perrone, 2023) whose instances are prevalent in abstract rewriting systems and the Wolfram Physics Project. While structures in the Wolfram Physics Project are often viewed as dynamic systems whose dynamics are described by abstract rewriting rules (such as subobject substitution in strings and hypergraphs), the dynamics can be thought of as non-unique in the sense that there are often many potential rules to apply and so multiway systems are used to model the composition of all applicable rules at all states stemming from an initial state (or from all states, e. g. in the case of the Ruliad); many of the hypergraph rewriting systems relevant to physics exhibit a property known as causal invariance which allows one to effectively reason about non-unique causation (of states which branch due to different rules but often serendipitously merge). The view expressed is that many abstract rewriting systems, particularly their multiway systems, can also be viewed as stochastic systems akin to systems of Markov kernels like the one shown above.

We will see that not all rewriting rules are "built the same"; when equipped with a divergence that provides a notion of mutual information, some rules are deterministic in that their entropy is zero whereas other rules are not deterministic in the sense that their entropy is not zero. Roughly, classical systems are those stochastic systems in which every rule application is deterministic and the rest will display behavior which can be truly called stochastic. After making this precise with the formalism of Markov categories, we explore a couple of concrete examples and sketch their potential relevance to the property of causal invariance.

We will see that not all rewriting rules are "built the same"; when equipped with a divergence that provides a notion of mutual information, some rules are deterministic in that their entropy is zero whereas other rules are not deterministic in the sense that their entropy is not zero. Roughly, classical systems are those stochastic systems in which every rule application is deterministic and the rest will display behavior which can be truly called stochastic. After making this precise with the formalism of Markov categories, we explore a couple of concrete examples and sketch their potential relevance to the property of causal invariance.

Introduction to Markov Categories

Markov categories (Fritz, 2020) offer a way to reason synthetically about systems which exhibit features of probability and statistics, including traditional Markov kernels and other processes which may not initially seem to be stochastic. Guided by the category Stoch whose objects are measurable spaces and whose morphisms are measurable Markov kernels, we define a Markov category below.

◼

Definitions

A Markov category is a symmetric semicartesian monoidal category supplying commutative internal comonoids which are coherent with the symmetric cartesian monoidal product; for a formal definition, see (Fritz, 2020). Given an object , the comultiplication X→X is called and the counit is called . In general, a morphism is viewed as a generalized "Markov kernel". A distribution on X is a morphism of the form , and a joint distribution is a morphism of the form I→X; Its marginal distributions are its compositions with the projection morphisms.

This enables a definition for a morphism to be deterministic, and enriching the homsets with divergences leads to a definition of entropy and many related notions from information theory (Perrone, 2023). These definitions form a synthetic model of probability that captures the central concepts from the analytic model of traditional Markov kernels, an example of which is shown as an image above.

(C,⊗,I)

X∈C

⊗X

copy

X

X→I

del

X

I→X

⊗Y

This enables a definition for a morphism to be deterministic, and enriching the homsets with divergences leads to a definition of entropy and many related notions from information theory (Perrone, 2023). These definitions form a synthetic model of probability that captures the central concepts from the analytic model of traditional Markov kernels, an example of which is shown as an image above.

Examples

Examples

Trivial Examples

Trivial Examples

Any symmetric Cartesian monoidal category becomes a trivial example of a Markov category since the commutative comonoid structures are uniquely specified by the symmetric Cartesian monoidal product. This uniqueness leads to a one-to-one correspondence between joint distributions and their marginal distributions.

As an example, consider the symmetric Cartesian monoidal category FinSet in which objects are finite sets, morphisms are functions, the monoidal product is the Cartesian product, the copy comultiplications are diagonal maps, and observe that the counits are uniquely specified by the universal property of terminal objects. This motivates the terminology of and .

As an example, consider the symmetric Cartesian monoidal category FinSet in which objects are finite sets, morphisms are functions, the monoidal product is the Cartesian product, the copy comultiplications are diagonal maps, and observe that the counits are uniquely specified by the universal property of terminal objects. This motivates the terminology of

copy

del

Finite Stochastic Matrices

Finite Stochastic Matrices

More examples of Markov categories arise from symmetric monoidal categories in which objects are finite sets and a morphism is a matrix .The most illuminating example of a Markov category is FinStoch, in which a morphism is a stochastic matrix , i.e. an assignment of a probability distribution over for each element . This be interpreted as the probability that a channel produces an output upon an input . One can see that FinStoch is not Cartesian by noting that a joint distribution on can correspond to more than one pair of marginal distributions on and , a phenomenon which occurs for example with multivariate Gaussian distributions. That relaxation from Cartesian to semicartesian produces non-trivial statistics. This example can be used to explain many of the basic definitions arising in the study of Markov categories, and this example lends a probabilistic interpretation to the structure arising in other Markov categories. In particular, the morphisms of FinStoch can be interpreted as Markov transition kernels from one state to another, whose output state depends probabilistically on the input state, and so the morphisms of another Markov category have some interpretation as Markov-type transition kernels (in which Markov-type is used informally by analogy). The Markov category FinSet can be viewed as a subcategory of FinStoch in which the stochastic matrix for each has a for exactly one and a in every other entry. A related example is FinSetMulti in which the stochastic matrix has a for at least one and a in every other entry. They are multi-valued functions in which the matrix , which could be interpreted as a "conditional probability" in FinStoch, can be interpreted as a "conditional necessity" in FinSet and a "conditional possibility" in FinSetMulti.

f:X->Y

()

f

x,y

xϵX,yϵY

f:X->Y

()

f

x,y

f(x)

Y

xϵX

f(y|x)

y

x

X⊗Y

X

Y

f(y|x)

x

1

y

f(y|x)

1

y

f(y|x)

Hypergraph categories

Hypergraph categories

Another class of examples of Markov categories comes from hypergraph categories, examples of which are abundant across quantum information theory, categorical quantum mechanics and the ZX-calculus, and the Wolfram Physics Project.

Markovian Interpretation of Multiway Systems

Markovian Interpretation of Multiway Systems

The morphisms of FinSet can be viewed as Markov-type transition kernels in which the system progresses in one definite way. Similarly, a morphism in FinSetMulti can be viewed as Markov-type transition kernels in which the system progresses from to simultaneously along every entry of equal to 1. Thus, they are akin to multiway systems. While the interpretation of multiway system substitutions was already of this flavor, the theory of Markov categories supplements multiway systems with new synthetic notions of probability, statistic, and information theory. In particular, the early results for Markov categories due to (Fritz, 2020) were about sufficient statistics and minimal sufficiency; this could be useful for establishing a proper inference theory for multiway systems and answer questions like how many queries are needed to efficiently estimate the dimensionality of a hypergraph which limits to something like a manifold with a fixed fractional dimension. Thus, an investigation of FinSetMulti would be useful for the study of Markov categories and for the study of multiway systems.

f:X->Y

X

Y

()

f

x,y

String Substitution Systems

String Substitution Systems

A pedagogical example is the Markov category whose objects are strings over a given alphabet, morphisms are applications of string substitution rules, is concatenation with itself, the terminal object is the empty string, and the deletion counit is uniquely specified. As an example, a string substitution system can consist of strings with two letters and two rules: a rule which replaces every instance of with an instance of and a rule which replaces every instance of with an instance of . Given an initial string, a multiway system is formed by applying these rules.

cop

y

X

A,B

AAA

A

AA

AA->B

AA

B

Determinism

Determinism

It is reasonable to believe, such as in a deterministic system, that a morphism should commute with the comultiplication operations so that where and ; morphisms with this property are called deterministic. It is common for a function of a random variable to do something deterministic in this sense, such as square the random variable of ; indeed these transformations are deterministic and this example agrees with our intuition that the randomness comes from the distribution and not from the squaring. Another function may add to the randomness of X by adding a number which is the result of rolling a die. The question is whether two copies of should result in the same value of the die, and it is clear that each application of should introduce a fresh source of randomness. In the equation of determinism, the morphism introduces randomness once on the left-hand side but twice on the right-hand side; intuitively, they should not be equal exactly when introduces randomness and they should be equal when introduces no randomness (and is thus deterministic). Determinism or a related concept may provide a fruitful way to think about the causal invariance property of abstract rewriting systems.

f:X->Y

L=R

L=cop◦f

y

Y

R=(f⊗f)

f

X

g:X→Y

g

g

Example

Example

Consider string substitution system over the alphabet consisting of two rules: and . One could easily check that the first rule commutes with the copy comultiplication operations. To see why the second rule does not, observe that and that . Whether an application of a rule is deterministic depends on the rule and on the state to which it is applied; since it may matter whether you copy before or after applying the second rule, morphisms corresponding to the second rule need not be deterministic and is not when applied to the input state . There may be an equivalent formulation of determinism based on structural properties of the multiway causal graph which are local to the application of the morphism in equation.

{A,B}

A→AA

=AA→B

copy◦(A)=AA

◦copy(A)=(AA)=B

When the morphism on the left-hand side is not exactly equal to the morphism on the right-hand side, one may ask: by how much does differ from L? And what exactly would this quantity represent?

R

Divergence

Divergence

A common mathematical structure for such problems is the metric space for which the metric satisfies non-negativity , positivity if and only if the points are distinct, symmetry , and the triangle inequality . A divergence is like a metric except its values are in and it only satisfies non-negativity and positivity; that is, it is not required to satisfy symmetry or the triangle inequality. Nevertheless, triangle-like inequalities have been shown to arise from compositions and other operations. These divergences can be built from Shannon entropy, Kullback-Leibler divergence, divergences for strings like the Hamming distance, inner products of vector spaces, and divergences for comparing similarities of graphs.

In order to quantify the degree to which a morphism is (non) deterministic, a Markov category is enriched (Perrone, 2023) with a divergence D(x,y) on each homset so that morphisms with common endpoints can be compared, resulting in non-negative quantities , denoted , and . By positivity, the divergence of a pair of morphisms is zero exactly when the two are equal. Then, a morphism can be equivalently defined to be deterministic when the quantity ()=D(copy◦||(⊗)◦copy) is equal to zero. This quantity () is called the entropy of , and it corresponds exactly to the existing notions of entropy in physics and information theory, as established by (Perrone, 2023). While it is possible to define a divergence that depends only on the domain and co-domain of the morphisms but not on the details of the morphisms, this would be trivial as divergences are defined on hom-sets (i. e. between pairs of morphisms which share the same domain and co-domain).

(M,d)

d:MM→R

d(x,y)≥0

d(x,y)>0

d(x,y)=d(y,x)

d(x,z)≤d(x,y)+d(y,z)

(M,d)

[0,∞]

In order to quantify the degree to which a morphism is (non) deterministic, a Markov category is enriched (Perrone, 2023) with a divergence D(x,y) on each homset

C(x,y)

,g

D(,g)

D(||g)

D(g||)

H

D

H

D

Statistical Mechanics in the Wolfram Physics Project

Statistical Mechanics in the Wolfram Physics Project

There is active work on Statistical Mechanics in the Wolfram Physics Project, centered largely around estimating the large-scale structure of well-behaved hypergraphs, e.g. that have a consistent dimension or curvature; this includes work on volume entropy. In particular, the concept of volume entropy, which is fundamentally about a fixed hypergraph, has been studied in the context of multiway systems (Zeschke, 2020).

Future Work

Future Work

The concept of entropy relevant for Markov categories is fundamentally about distributions and Markov kernels.

It would be interesting to explore relevant analogies with the fundamental laws of thermodynamics, wherever they are applicable. There is much analytic work by (Perrone, 2023) on how the divergence is affected by various operations, such as by post-composition of the arguments with a deterministic morphism. It would be interesting to explore what kinds of behavior hold for all or for a broad range of hypergraph rewriting systems, e. g. sampled at random, an exploration which is particularly suitable for implementation with the MultiwaySystem resource from the Wolfram Function Repository.

Lastly, this line of research into the entropy of multiway systems may be useful for quantitatively measuring the extent to which the limiting behavior of a given multiway system is deterministic. A related idea was presented in (Wolfram, 2020) with respect to a concept of entropy which was presented only in informal analogy with one in cellular automata. In the future, this statistical test may bear implications for causal invariance.

It would be interesting to explore relevant analogies with the fundamental laws of thermodynamics, wherever they are applicable. There is much analytic work by (Perrone, 2023) on how the divergence is affected by various operations, such as by post-composition of the arguments with a deterministic morphism. It would be interesting to explore what kinds of behavior hold for all or for a broad range of hypergraph rewriting systems, e. g. sampled at random, an exploration which is particularly suitable for implementation with the MultiwaySystem resource from the Wolfram Function Repository.

Lastly, this line of research into the entropy of multiway systems may be useful for quantitatively measuring the extent to which the limiting behavior of a given multiway system is deterministic. A related idea was presented in (Wolfram, 2020) with respect to a concept of entropy which was presented only in informal analogy with one in cellular automata. In the future, this statistical test may bear implications for causal invariance.

Acknowledgements

◼

My mentors, Xerxes D. Arsiwalla and Eric James Parfitt, at the Wolfram Science Winter School for their guidance

◼

Rany Tith for helpful discussions on Markov categories and time

◼

Stephen Wolfram and everybody who helped make the Wolfram Science Winter School a success!

References